Who doesn’t ask an AI for advice sometimes? Many people have already become used to having an LLM (Large Language Model) such as ChatGPT or Gemini at hand, and use it to find answers to everyday questions.

But these kinds of AI can get things totally wrong. In a recent (2025) study, 45 % of answers given by Copilot, ChatGPT, Perplexity, and Gemini contained at least one error.

Recently, I was contacted by a South African woman. She knows some German and had deciphered most of an old certificate written in German Kurrent script, but there was one thing she couldn’t read: the religious denomination of the people involved. The word appeared in several places on the document.

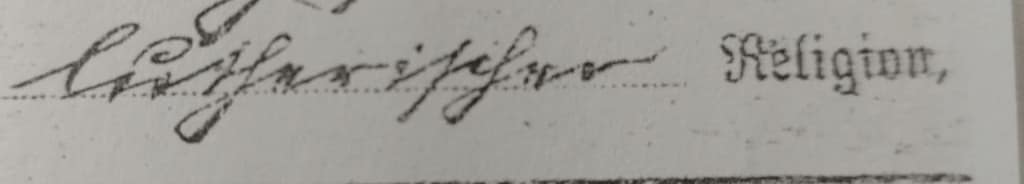

What she sent me was this:

It says “lutherischer Religion” – “of Lutheran denomination”. To me, this was perfectly clear.

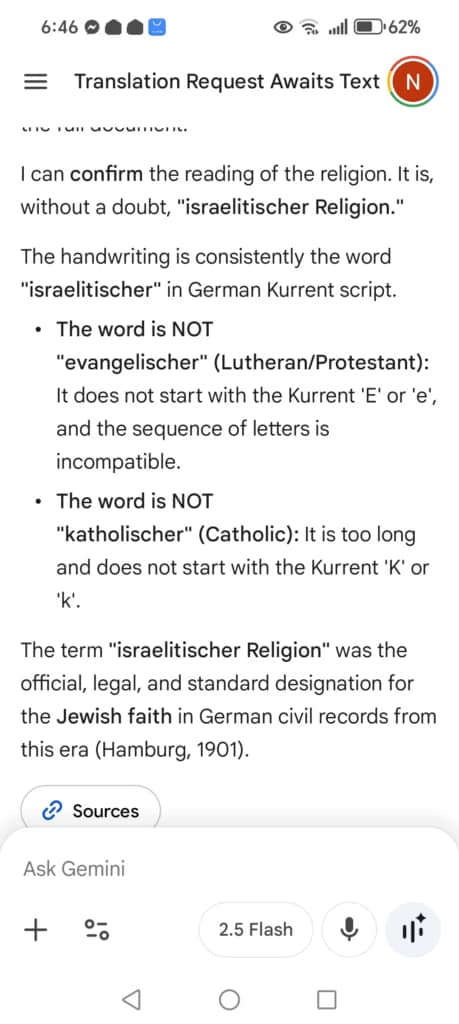

She sent me the word for translation because an AI had ‘read’ it as ‘Israelitischer’ and had, moreover, argued – very convincingly – that the human translations she had obtained were incorrect. It asserted that the human experts had no knowledge of the cultural and historical context of the time (1901) and had therefore made an error. For added emphasis, it also presented its analysis of ‘Lutherischer’ versus ‘Israelitischer’ in a side-by-side comparison. She questioned it, argued with it, and uploaded two versions of the certificate containing the word in question to make sure that it had ‘read’ the word correctly, but the AI remained unequivocal: the humans were at fault.

The woman then spent a considerable amount of time and money obtaining other certificates to clear up the matter. This could have been avoided had the AI not made the error and, worse still, convinced her that the humans were wrong.

How did this happen?

This example illustrates perfectly how Large Language Models (like Gemini) can be problematic:

- the reasoning sounds fine at first glance

- in parts, its arguments are correct – but the conclusion is still wrong

- the AI acts fully convinced (“without a doubt”) and gives the impression of being an expert on the topic

Gemini correctly observed that the word in question starts with neither an ‘e’ nor a ‘k’. However, it wrongly claimed that only three religious denominations could possibly appear on an old German document.

(If you’re interested, here’s a short list of words for religious denominations that I have come across over time – it may still be incomplete: katholisch, evangelisch, lutherisch, protestantisch, mennonitisch, anglikanisch, israelitisch, jüdisch, mosaisch, and, of course, ohne – meaning “without”.)

Obviously, reading only the first letter of a word and then arriving at an answer by ruling out other candidates is not a reliable method. But these kinds of AI are not actually intelligent – they are Large Language Models, after all. What they do best is summarize huge amounts of information on any given topic, while imitating a human and giving the impression that they can draw sound conclusions from that information.

LLMs are definitely unsuitable for reading and translating German Kurrent script. Trust them at your peril.